The landscape of scientific discovery is undergoing a structural shift that renders traditional laboratory timelines obsolete. Researchers are no longer solely dependent on the iterative grind of physical experimentation, as generative artificial intelligence has moved from a tool for creative synthesis to a primary engine for empirical research. By leveraging transformer architectures, the same technology underpinning consumer-facing chatbots, scientists are now navigating high-dimensional vector spaces to predict complex physical interactions with unprecedented speed. (It is a quiet revolution happening in compute clusters rather than test tubes.)

At the core of this transition lies the ability of large models to identify latent patterns in massive, multi-dimensional datasets. In fields like protein folding and materials science, where the sheer number of potential molecular configurations once demanded decades of brute-force computation, AI now identifies promising leads in a fraction of that time. Industry estimates suggest that the lead optimization phase of drug discovery, historically a stubborn bottleneck, is seeing a 30 to 40 percent reduction in duration. This represents a tangible acceleration of medical progress, turning years of chemical screening into a streamlined digital simulation process.

Decoding the Black Box Problem

Despite the technical efficacy of these systems, the scientific community is grappling with a significant epistemological hurdle: the “black box” problem. In traditional science, a methodology must be traceable, reproducible, and transparent. However, deep learning models often arrive at accurate conclusions without an interpretable path or a clear chain of logic that a human researcher can audit. This creates friction between the speed of machine-generated insights and the rigor of the scientific method. If the process remains a mystery, is the discovery inherently compromised? (Many researchers argue that it is, which is why the push for explainable AI is becoming as critical as the push for raw performance.)

Human-Centered AI in the Laboratory

Prominent figures in the field, including Fei-Fei Li, have advocated for a focus on “human-centered AI.” This philosophy posits that as AI integrates into the foundation of biology and physics, the priority must shift toward safety and verifiability. The machine should act as a force multiplier for human intellect, not a blind authority that directs research. By keeping the human element in the loop, the scientific community ensures that machine-led discoveries remain anchored to physical reality and ethical standards. This is the necessary counterbalance to the rapid digitization of chemistry and biology.

Transforming Materials Science

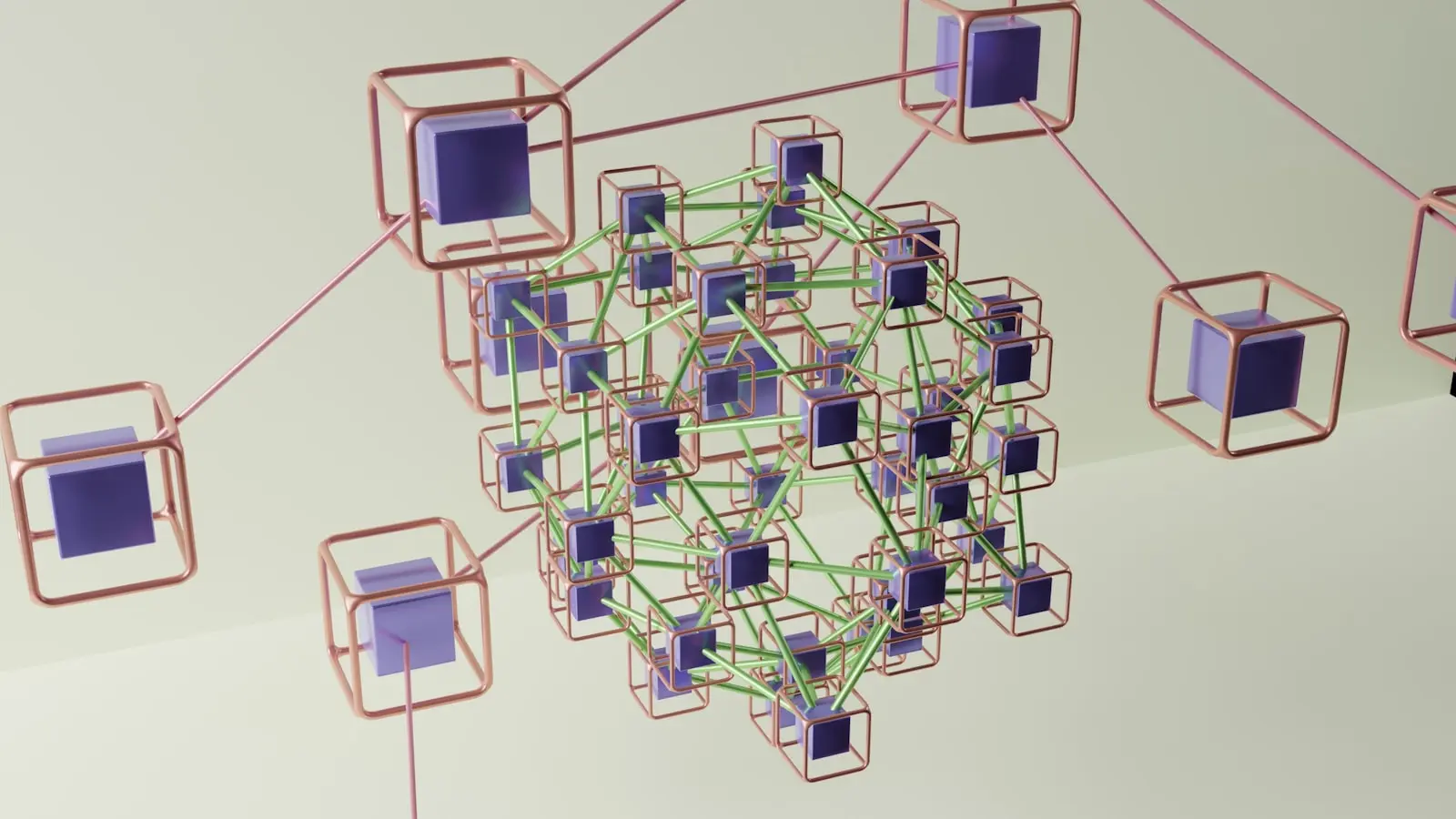

Beyond drug development, the impact on materials science is equally profound. By simulating chemical reactions within virtual environments, researchers can screen thousands of candidate materials and their predicted properties before attempting a single physical synthesis. This allows for the discovery of new alloys, superconductors, and energy-dense battery materials that might otherwise have been ignored because of the prohibitive cost of physical testing. The workflow is changing fundamentally:

- Data Integration: Aggregating historical experimental data into unified, machine-readable formats.

- Predictive Modeling: Utilizing transformers to map molecular interactions in high-dimensional space.

- Virtual Screening: Eliminating non-viable candidates before they ever reach the lab bench.

- Physical Validation: Focusing expensive resources only on the high-probability outputs generated by the models.
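The screening and validation steps in the workflow above can be illustrated with a minimal Python sketch. Everything in it is hypothetical: `Candidate`, `toy_score`, and `virtual_screen` are names invented for illustration, and the fixed weighted sum merely stands in for a trained predictive model. It shows only the general shape of the idea, scoring every candidate cheaply in software and forwarding a short list to the lab, not any particular group's pipeline.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple


@dataclass(frozen=True)
class Candidate:
    """A hypothetical candidate material described by numeric descriptors."""
    name: str
    features: Tuple[float, ...]


def toy_score(candidate: Candidate) -> float:
    """Stand-in for a trained model (an assumption, not a real predictor).

    A real pipeline would call a learned model here; this is a fixed
    weighted sum so the example stays self-contained.
    """
    weights = (0.5, 0.3, 0.2)
    return sum(w * x for w, x in zip(weights, candidate.features))


def virtual_screen(
    candidates: Iterable[Candidate],
    score: Callable[[Candidate], float],
    threshold: float,
    top_k: int,
) -> List[Candidate]:
    """Drop candidates scoring below threshold, then keep the top_k best."""
    scored = [(score(c), c) for c in candidates]
    viable = [(s, c) for s, c in scored if s >= threshold]
    # Highest predicted score first, so lab time goes to the best leads.
    viable.sort(key=lambda pair: pair[0], reverse=True)
    return [c for _, c in viable[:top_k]]


if __name__ == "__main__":
    pool = [
        Candidate("A", (1.0, 1.0, 1.0)),
        Candidate("B", (0.0, 0.0, 0.0)),
        Candidate("C", (0.8, 0.6, 0.4)),
        Candidate("D", (0.2, 0.2, 0.2)),
    ]
    shortlist = virtual_screen(pool, toy_score, threshold=0.5, top_k=2)
    print([c.name for c in shortlist])  # prints ['A', 'C']
```

The threshold and `top_k` values here are arbitrary; in practice they would presumably be set by how many physical syntheses a lab can afford and how far the model's predictions can be trusted.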

The Future of Scientific Infrastructure

We are witnessing the emergence of a new scientific infrastructure in which compute power is treated as a utility as essential as water or electricity. The ability to simulate physical reality is becoming the primary competitive advantage for pharmaceutical companies and research institutions alike. While the current gains in efficiency are notable, the long-term potential lies in the ability to generate entirely new classes of knowledge that human intuition might never have reached on its own. (The challenge, of course, is ensuring that our reliance on these digital tools does not degrade our fundamental understanding of the physical world they simulate.)

As the technology matures, the definition of a “researcher” will likely broaden to include those who can best orchestrate these complex systems. The future belongs not just to those who can conduct experiments, but to those who can frame the right questions for the machines to solve. The era of exhaustive, linear experimentation is drawing to a close; the era of algorithmic discovery has begun.